With the recent news regarding the availability of Docker on Windows Server 2016, it is a good time to talk about using Docker in Windows-based environments. While it isn’t recommended to install and use the Docker for Windows tool on production servers, it is a really nice tool for local development.

There are a number of blog posts that describe the process of getting Docker up and running in various ways. I have found Docker for Windows to be the easiest option.

Assuming that you have the Windows 10 Anniversary Update installed, you can go grab the installer here. Make sure to download from the beta channel to gain the ability to switch between Windows and Linux-based containers, which was introduced in Beta 26.

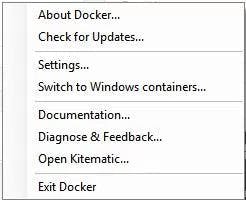

After you have run through the installer, everything should get configured automatically and you should be up and running. By default, your Docker client will be configured to use Linux containers.

The first time you switch to Windows containers you might need to reboot your machine. After that first reboot, switching between container types should be seamless. Just be aware that the two modes are exclusive: when Docker is set to Linux containers you can only run Linux-based images, and when it is set to Windows containers you can only run Windows-based images. So, for example, if you select the option to “Switch to Windows containers…” then you should be able to pull and run a Windows container.

> docker pull microsoft/nanoserver

If the pull worked, then the Windows container functionality should be working. There is a nice blog post that discusses in detail what is going on behind the scenes when switching between Linux and Windows containers if you are interested.
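As a rough sketch of how the current mode could be checked before pulling, the snippet below asks the Docker daemon which operating system it reports and only pulls when that matches what you expect. It assumes a POSIX-style shell, and the function names “docker_server_os” and “pull_if_mode” are hypothetical helpers, not part of Docker. The docker commands inside them can also be typed directly at any prompt.

```shell
# Print the OS reported by the Docker daemon the client is connected to.
# This is expected to be "linux" or "windows", depending on the selected mode.
docker_server_os() {
  docker version --format '{{.Server.Os}}'
}

# Pull an image only when the daemon is in the expected mode.
# $1 = expected daemon OS ("linux" or "windows"), $2 = image to pull
pull_if_mode() {
  actual_os=$(docker_server_os) || return 1
  if [ "$actual_os" != "$1" ]; then
    echo "Docker is running $actual_os containers; switch to $1 containers first." >&2
    return 1
  fi
  docker pull "$2"
}
```

For example, “pull_if_mode windows microsoft/nanoserver” would refuse to pull while the client is still set to Linux containers.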

Practical Container Examples

Now that we have covered the basics of how to run a Windows-based container, let’s talk about some advantages of Docker and then look at a few examples for Linux-based containers.

There are some nice advantages of running commands via Docker. First, you don’t need to worry about gathering and installing any extra dependencies or files on your system, cluttering up the system and leading to potential problems in the future. Be aware, though, some Docker images can be quite large, so when selecting your image, be sure to note the image size. The second advantage is that you have a nice easy way to use Linux tooling without running a more heavyweight VM to get access to the tools you need. This makes context switching a lot smoother and seamless.
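To keep an eye on image size, one option is to list the images already on your machine together with their sizes. The sketch below assumes a POSIX-style shell, and “image_sizes” is a hypothetical helper name. The “docker images” command it wraps works the same way when typed directly.

```shell
# List local images as a table of repository, tag, and size,
# so unusually large images are easy to spot.
image_sizes() {
  docker images --format 'table {{.Repository}}\t{{.Tag}}\t{{.Size}}'
}
```

Running “image_sizes” after a pull shows how much disk space the new image takes.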

Say that you are working in a Windows environment but are comfortable using Linux-based tools. You can easily run a Linux container when the Docker for Windows client is set to run Linux containers.

There are often times when an admin needs to check if a port is open and listening. Installing and using telnet is a possibility, but the Linux Netcat utility is usually the de facto choice when looking at ports. Netcat can be used with Docker on your Windows machine with no additional software installation or configuration. To test the Netcat example, make sure to switch Docker to use Linux containers. You can verify the current mode by running the “docker version” command and checking the OS/Arch value reported for the server. Then run the following command.

> docker run --rm appropriate/nc -tv google.com 80

Or to get the Netcat command to simply spit out help options you can run the following.

> docker run --rm appropriate/nc
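If you need to check several ports on the same host, the Netcat command above can be wrapped in a small loop. This is only a sketch, assuming a POSIX-style shell. “check_ports” is a hypothetical helper name, and it reuses the same image and flags as the example above without adding any others.

```shell
# Run the Netcat container once per port against a single host.
# $1 = host name, remaining arguments = ports to check
check_ports() {
  host=$1
  shift
  for port in "$@"; do
    echo "--- $host:$port"
    docker run --rm appropriate/nc -tv "$host" "$port"
  done
}
```

For example, “check_ports google.com 80 443” runs the check for both ports in turn.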

Another good Docker use case might include testing software developed to run on Linux that you otherwise wouldn’t be able to test out on Windows. For example, there are a number of different Nagios Docker images available, which can simply be pulled and run to quickly test out features and functionality.

Not only is Nagios notoriously difficult to install and configure, but without Docker it would also take another server or machine running Linux just to be able to run Nagios. Using Docker drastically reduces the time and effort to get up and running. To run a Nagios container, run the following command.

> docker run -d -p 25:25 -p 80:80 quantumobject/docker-nagios

Then, to log in, navigate to localhost/nagios in a browser. The username is “nagiosadmin” and the password should be “admin”. When you’re done testing, you can kill and remove the container.
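Cleaning up is easier if the container is started with a known name. The sketch below shows one way to do that, assuming a POSIX-style shell. The container name “nagios-test” and the function names are hypothetical choices for illustration. The docker commands themselves can be typed directly at any prompt.

```shell
# Start Nagios under a fixed name so it can be referenced later.
# "nagios-test" is a hypothetical container name chosen for this example.
nagios_start() {
  docker run -d --name nagios-test -p 25:25 -p 80:80 quantumobject/docker-nagios
}

# Stop the container and then remove it once testing is finished.
nagios_cleanup() {
  docker stop nagios-test && docker rm nagios-test
}
```

If you started the container without a name, “docker ps” lists the running containers along with the ID to pass to “docker stop” and “docker rm” instead.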

One last example: sometimes you simply want to drop into a bash prompt inside a Docker container to test out some commands. Having the flexibility to run commands and try out different Linux tools locally is really handy.
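A minimal sketch of what that could look like is shown below, assuming a POSIX-style shell and Docker set to Linux containers. “linux_shell” is a hypothetical helper name and the “ubuntu” image is just an assumed default. Any image that ships bash would work, but note that some small images such as “alpine” only include sh.

```shell
# Open an interactive bash prompt in a throwaway container.
# $1 = image to use (optional, defaults to "ubuntu")
# --rm removes the container on exit; -it attaches an interactive terminal.
linux_shell() {
  docker run --rm -it "${1:-ubuntu}" bash
}
```

Typing “exit” at the prompt leaves the container, and because of the “--rm” flag nothing is left behind afterwards.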

Conclusion

Having the ability to easily run Linux commands on Windows with the Docker engine is a nice little trick that can come in handy for administrators who need quick access to Linux but don’t want to run a VM or otherwise log in to a Linux machine.

Docker offers a lot more flexibility than the examples given but, hopefully, this post gets readers thinking about some potential use cases of their own. If you haven’t already, this should give the Windows folks more of an excuse to at least start playing around with and learning Docker.

Docker for Windows takes away almost all of the pain of running containers on Windows and gives admins and engineers easy access to start playing. So, if you haven’t checked Docker out yet, playing with it locally is a great way to explore and see some of the benefits it has to offer. And if you are on the fence about its place in the Windows world, maybe you will be persuaded to give Docker more of a chance in the future.
